Sci-fi Primers: Remote Sensing - Part 2

The Science Behind Remote Sensing ("Ship Sensors")

The Science of Remote Sensing

In part 1, we introduced the topic of remote sensing—the act of perceiving something about an object from a distance.

Now, we’re going to take a quick tour of the science relevant to remote sensing. This topic branches out into several fields of study, each of which could (and does) fill several textbooks, so today we’re only going to skim the surface.

The essential take-home message for artists hoping to flesh out their worlds is this: there is a hard limit on how much you can know about something happening over there, and that limit is determined by how you observe, and how long you keep observing.

In short, we’re talking about the limits of perception imposed by the laws of physics.

Physical Limits of Perception

There’s a fundamental limit on how much you can perceive about an object from a distance, given a finite amount of data being transmitted and collected.

There’s some subtlety to unpack here. First, perception can take many forms. We see visible light, some snakes sense infrared, and using complex machinery we can turn any wavelength on the electromagnetic spectrum into an interpretable picture. We can also use sound, or gravity, or something else.

The limits on sensing something at a distance are described by four key areas:

The transit time of information crossing the gap between object and observer

The amount of information gathered (and how it’s gathered)

The observing medium

The physical laws of optics

First, let’s talk about propagation time.

Time Delay

This one is easiest to understand and the most consistently ignored.

A signal (which can be a beam of light, a radio transmission, or a yell) takes a finite time to cross a finite distance. The bigger the gap, the longer the time taken to cross it.

Nothing carries information faster than light, the universal speed limit. In everyday circumstances, we think of technology that relies on light, radio or other electromagnetic signals (e.g., the internet, telephones, GPS) as instantaneous. This is because these signals travel at the speed of light: roughly 300,000 kilometres per second. Given that the circumference of the Earth is only about 40,000 kilometres, light can cover a distance equal to the Earth’s circumference in about 0.13 seconds (in 1 second, it could travel 7.5 Earth circumferences). So, the delay is there, but it’s so small that we rarely notice.

That stops being true at astronomical distances. On the scale of a solar system, even at 300,000 kilometres per second, a beam of light takes minutes or hours to travel between planets. For a two-way conversation, that figure doubles.
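To put rough numbers on this, here is a minimal Python sketch of one-way and round-trip light delay. The distances are approximate round figures chosen for illustration (they are assumptions, not values from this article), and the function names are made up for this example.

```python
# Speed of light, rounded as in the text above (km/s).
C_KM_PER_S = 300_000


def one_way_delay_s(distance_km):
    """Seconds for a light-speed signal to cross distance_km."""
    return distance_km / C_KM_PER_S


def round_trip_delay_s(distance_km):
    """Seconds for a question to go out and the answer to come back."""
    return 2 * one_way_delay_s(distance_km)


# Approximate distances in km (illustrative round figures, assumed here).
EXAMPLE_DISTANCES_KM = {
    "Around the Earth": 40_000,
    "Earth to Moon": 384_000,
    "Earth to Sun": 150_000_000,
    "Sun to Neptune": 4_500_000_000,
}

for name, km in EXAMPLE_DISTANCES_KM.items():
    print(f"{name}: one-way {one_way_delay_s(km):,.1f} s, "
          f"round trip {round_trip_delay_s(km):,.1f} s")
```

With these figures, a signal from the Sun takes about 500 seconds (a little over 8 minutes) to reach Earth, and one crossing from the Sun to Neptune takes about 15,000 seconds, or roughly 4 hours.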

But this delay time is only part of the picture. We also need to think about how much information we can pack into a signal.

For that, we need a deeper dive into what information means.

Information Theory

The word “information” is used colloquially as an abstract term referring to meaning or patterns or relationships.

But in a field of study called Information Theory, information is a technical term with a well-defined meaning that can be measured and calculated (see part 6 for a deeper look at the maths). In this technical sense, information is a type of conserved quantity in the universe. Nature has lots of these, some of which you’ll probably recognise: conservation of energy, conservation of momentum.

We are talking here about the conservation of information. At least on a quantum level, you cannot create or destroy information, only rearrange and redistribute it.

What about when I wipe a magnet over my hard drive? The data are obliterated! Actually, they’re just scrambled. It’s so difficult to recover them that it’s impractical, but in principle there’s nothing to stop you doing so.

Many people have heard about this in some fashion, but what often goes unappreciated is the inverse: while you can’t destroy information (even stuff you’d really rather be rid of), you also can’t create more information than you started with.

That is, you can’t get more information than your equipment gathered just by “being clever”. Think of the scene in CSI where the agent pulls up a satellite picture of the Earth and then, with a click of a button, zooms right down to a live feed of somebody getting out of their car.

Not only do we not have satellites anywhere near that fancy, but building something like that is not even possible in principle (even military satellites are more limited than in the movies). Aliens won’t be able to do it, either, not even super advanced ones. In practice, this kind of thing is achieved by having a dual-mode of monitoring: the high-res images of your car/house on Google Earth are taken by airplanes.

The same goes when a detective gets a fuzzy CCTV image of a criminal and brings it to a tech to be ‘enhanced’. The tech always presses one button and the image resolves into a 4K, crystal-clear photograph, including even the evil glint in the criminal’s eye.

Both are examples of getting something for nothing. And, unfortunately, the universe doesn’t allow that.

All of this comes down to the fact that the amount you can learn about something way over there depends on how much information you can pack into a transmission. If you don’t put something in at one end, there’s no way to extract it at the other end. Something you can try at home: convert a vector image like an .SVG or .EPS (which contains all the information necessary to reconstruct the image at any scale) to .JPG (which stores a fixed grid of pixels and shrinks the file further by throwing some of the detail away). You’ll find that if you blow up the original image it looks clear, but the JPG version looks blurry, because the JPG version doesn’t contain all the information, and so there’s no way of retrieving the original high-fidelity data.
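The same idea can be shown with a toy example. The sketch below is only an illustration with made-up pixel values and hypothetical function names: it records a striped row of pixels at half resolution, then tries to "enhance" it back to full size.

```python
def downsample(samples, factor):
    """Keep one averaged value per block of `factor` samples (lossy)."""
    return [
        sum(samples[i:i + factor]) / factor
        for i in range(0, len(samples), factor)
    ]


def upsample(samples, factor):
    """'Enhance' by repeating each value `factor` times."""
    return [value for value in samples for _ in range(factor)]


# Two different made-up rows of pixel brightness with fine stripes.
original = [0, 255, 0, 255, 0, 255, 0, 255]
other = [255, 0, 255, 0, 255, 0, 255, 0]

stored = downsample(original, 2)   # what the low-res camera recorded
enhanced = upsample(stored, 2)     # what the "enhance" button gives back

print("stored:  ", stored)
print("enhanced:", enhanced)
print("stripes recovered?", enhanced == original)
print("other row stores identically?", downsample(other, 2) == stored)
```

Both striped rows collapse to the same flat grey once recorded, so no amount of processing afterwards can tell which one was really there. The stripes were never captured in the first place.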

N.B. Information as described here shouldn’t be confused with general meaning. Having a huge amount of information doesn’t necessarily translate to huge knowledge. You could fill all the hard drives on Earth with observations of goings on in outer space, but without the expertise to process those data, the result is zero knowledge gained. Conversely, you could take a photograph of a crowd of people and draw a circle around a person of interest. The data on display are the same, but the relative meaning is more significant.

Observing Medium

What you use to carry the information also matters. From everyday experience, we have visible light and sound waves. They’re both methods of remote sensing that our bodies possess by use of specialised equipment: our eyes and our ears.

It’s no surprise that light and sound obey very different rules. Light travels faster than sound. Light can travel through a vacuum, while sound cannot. Light can be modulated to encode far more information than sound, for reasons we won’t get into (see: lasers). These contrasts emerge because light and sound waves travel through different media: the electromagnetic field and a compression of air molecules, respectively.

The same logic applies to any two media. Each medium has its own characteristics, limits and uses. Crucially for our discussion, different media carry different amounts of information and can do so at different speeds.

For almost all practical purposes, contemporary technology relies on some form of electromagnetic radiation for remote sensing.

Electromagnetic (EM) radiation sounds like a mouthful of nerd, but it’s really just a form of ripple, analogous to a wave travelling along a piece of string. Instead of a piece of string wobbling, it’s little pairs of electric and magnetic fields.

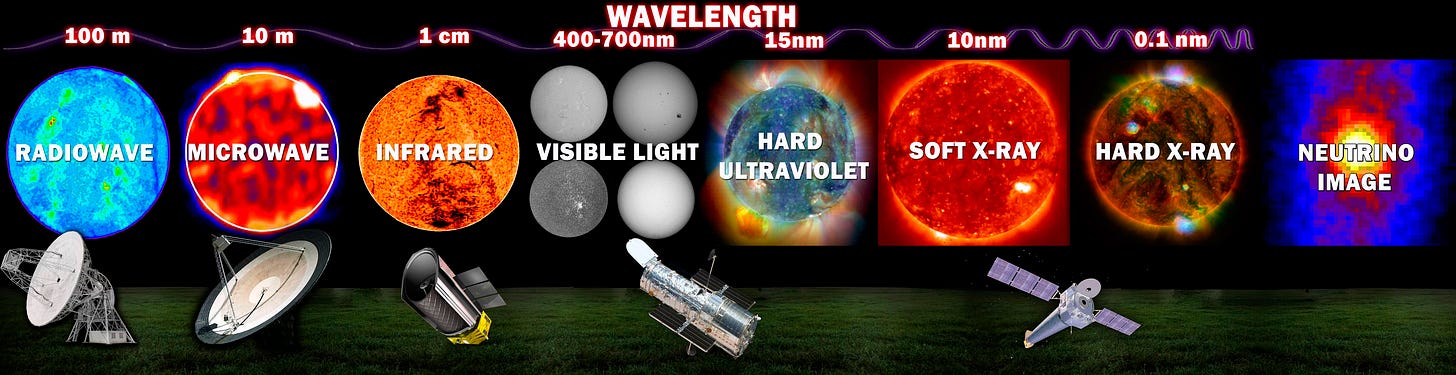

Carrying on the string metaphor, we know that if we wiggle a piece of string slowly, we get slow ripples; if we wiggle fast, the string wiggles fast. We call that “rate of ripple” the frequency. We hear different frequencies of sound as different pitches. EM radiation also has an associated frequency, and it turns out that EM radiation at low frequencies behaves very differently to EM radiation at high frequencies, so we call them by different names. Radio waves are lowest in frequency, gamma rays are highest in frequency, and microwaves, infrared, visible light, ultraviolet and X-rays are somewhere between. But, under the jargon, it’s all just little electric and magnetic fields rippling at different rates. Together, we call the full span of frequencies the EM spectrum, and each part is useful for specific things.
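For the numerically inclined, frequency and wavelength are tied together by the speed of light: wavelength = speed of light / frequency. The sketch below applies that relation and sorts a frequency into a named band. The band boundaries are approximate, conventional round numbers assumed for this example (different sources draw the lines in slightly different places), and the function names are hypothetical.

```python
import bisect

C_M_PER_S = 3.0e8  # speed of light in metres per second (rounded)

# Approximate upper frequency limit of each band in Hz (assumed values).
BAND_UPPER_LIMITS_HZ = [3e8, 3e11, 4e14, 7.5e14, 3e16, 3e19]
BAND_NAMES = [
    "radio", "microwave", "infrared", "visible light",
    "ultraviolet", "X-ray", "gamma ray",
]


def wavelength_m(frequency_hz):
    """Wavelength in metres of an EM wave with the given frequency."""
    return C_M_PER_S / frequency_hz


def band_name(frequency_hz):
    """Name of the (approximate) band that a frequency falls into."""
    index = bisect.bisect_right(BAND_UPPER_LIMITS_HZ, frequency_hz)
    return BAND_NAMES[index]


# Example frequencies: an FM radio station, Wi-Fi, green light.
for label, hz in [("FM radio", 1.0e8), ("Wi-Fi", 2.4e9), ("green light", 5.5e14)]:
    print(f"{label}: {band_name(hz)}, wavelength {wavelength_m(hz):.3g} m")
```

The FM station comes out as a 3-metre radio wave, Wi-Fi as a 12.5-centimetre microwave, and green light as a wave a little over half a micrometre long.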

The Laws of Optics

Given that most contemporary technology (and most posited near-future technology) relies on EM radiation, we need to talk about the scientific theory underpinning its behaviour: optical physics.

Absorption & Transmission

One key aspect in how different parts of the EM spectrum differ is how much they get absorbed when something is in their way. You can’t see through your hand, but if you cover your phone (which uses microwaves) with your hand then you can still send a text. So, microwaves are absorbed less by human flesh and bone than light.

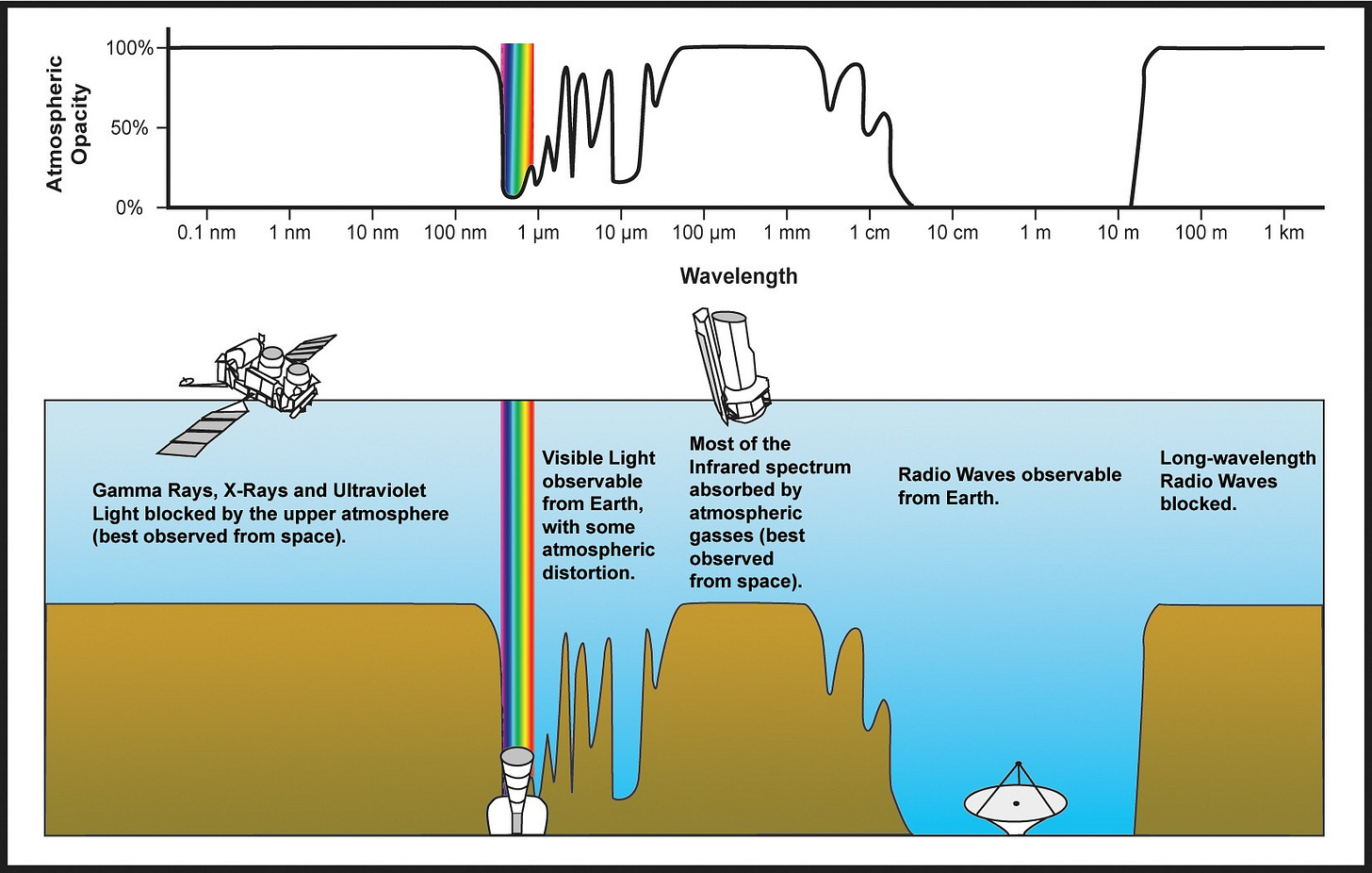

Above is a graph (sorry) showing how much different parts of the spectrum can punch through Earth’s atmosphere. We have, from left to right: gamma, X-rays, ultraviolet (UV), visible light, infrared (IR), microwaves and radio waves. Scoring 100% means nothing gets through (100% absorbed by the air between the ground and space), and scoring 0% means everything gets through (the air doesn’t interact with it at all).

So, at a glance, we can see many things:

We’re not all dead from harmful gamma/X-rays/UV radiation from outer space, because the atmosphere absorbs it all

We don’t all freeze to death because the atmosphere absorbs a lot of the infrared radiation (which we perceive as heat) and cocoons us in a warm blanket of air

Radio waves and microwaves are a great way to communicate, especially with satellites, because the atmosphere barely attenuates the signal, so signals can travel over vast distances

We have eyes that operate in the visible light part of the spectrum, because the atmosphere is transparent to incoming light from the Sun (also because most of the Sun’s EM emissions are in this range).

The diverse behaviour of different parts of the spectrum can be used to great effect when studying objects in space. Above are pictures of the Sun measured using different parts of the spectrum. Because of their different absorption properties, we can image different layers of the Sun or structures on its surface.

N.B. For a really cool live demonstration of this using the Solar Dynamics Observatory (SDO) spacecraft, which watches the Sun from orbit around the Earth, take a look here.

Observation Time

The amount of time spent gathering observations also matters. As any amateur astrophotographer will know, a long exposure time is needed to gather enough light for an image with good contrast.

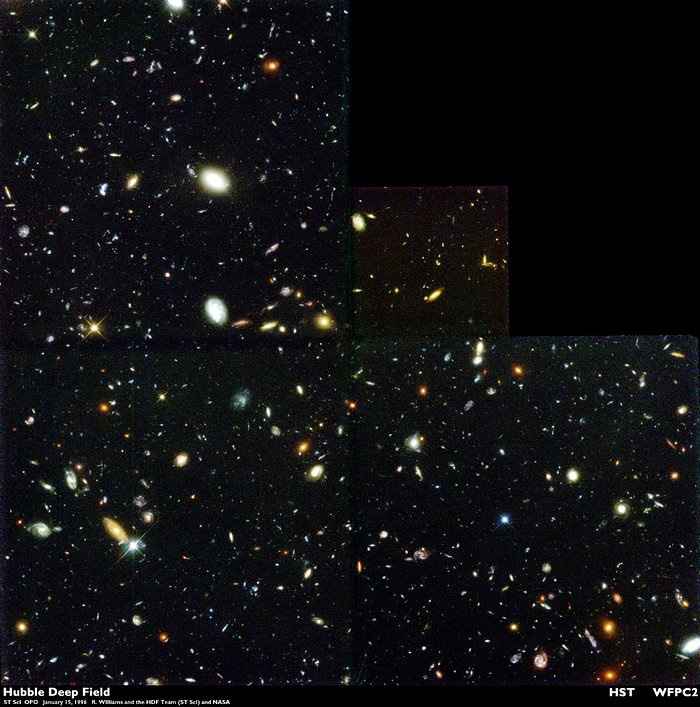

The same goes for any observations of faint light sources. For instance, the Hubble Space Telescope famously produced the Deep Field image from a patch of night sky about as large as a tennis ball seen from 100 metres away.

But this wasn’t a snapshot. It was built up from hundreds of separate exposures taken over 10 consecutive days.

The crux of this is that long-range sensors won’t offer an image right away. Collecting enough data takes a minimum integration time, and the fainter the source, the longer that time is.
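A rough way to estimate integration time is sketched below. It assumes an idealised detector where the only noise comes from the random arrival of photons, in which case the signal-to-noise ratio grows as the square root of the number of photons collected. That assumption, the photon rates and the function names are all illustrative; real sensors also contend with background light and electronic noise.

```python
import math


def snr(photons_per_second, exposure_s):
    """Signal-to-noise ratio for an idealised photon-counting detector."""
    return math.sqrt(photons_per_second * exposure_s)


def exposure_needed_s(photons_per_second, target_snr):
    """Exposure time in seconds needed to reach target_snr."""
    return target_snr ** 2 / photons_per_second


# Made-up photon arrival rates for a bright and a faint source.
for label, rate in [("bright source", 100.0), ("faint source", 0.01)]:
    seconds = exposure_needed_s(rate, target_snr=10)
    print(f"{label}: {seconds:,.0f} s of exposure for a signal-to-noise of 10")
```

Under these assumptions, quadrupling the exposure only doubles the image quality, and a source ten thousand times fainter needs ten thousand times longer to reach the same quality.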

Image Resolution

Imagine a super-duper set of spectacles that gave you perfect vision. To you, it would look like you see everything. But you can’t. It’s not physically possible to get an infinitely resolved image, because of something called the diffraction limit.

For example: look at an object nearby. Note all its details, every surface imperfection, its colouration, the reflections. Easy enough.

Now note the inter-atomic separation of the material it’s made of. You likely can’t—if you can, call a lab or something, they’re going to want to dissect you immediately. You can’t do it because your eyes are physical structures that have physical limits; they operate within a range of parameters that allow you to see things of a certain size and in a given level of detail. The details of atoms are outside that range.

Other species, however, have eyes structured differently to ours. Some, such as birds of prey, have very large eyes relative to their body mass, and have a large portion of their brains given over to visual processing. Bigger eyes and a different internal structure give them higher resolution: they can see small things from farther away.

The take-home message here is that the size of the information-gathering apparatus is a key limiting factor. To observe distant parts of the universe, space telescopes need enormous mirrors several metres across, fine-tuned to the micrometre. To build a picture, they need to observe a given region with great precision and do so for a long time.
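To see how aperture size sets the limit, the sketch below uses the Rayleigh criterion, a standard rule of thumb for a circular aperture: the smallest resolvable angle is roughly 1.22 × wavelength / aperture diameter. The article does not name this formula, so treat it as one common way of putting numbers on the diffraction limit. The example distances and function names are assumptions for illustration.

```python
RAYLEIGH_FACTOR = 1.22  # rule-of-thumb constant for a circular aperture


def angular_resolution_rad(wavelength_m, aperture_m):
    """Smallest resolvable angle in radians (Rayleigh criterion)."""
    return RAYLEIGH_FACTOR * wavelength_m / aperture_m


def smallest_feature_m(wavelength_m, aperture_m, distance_m):
    """Smallest resolvable detail at a given distance (small-angle approx.)."""
    return angular_resolution_rad(wavelength_m, aperture_m) * distance_m


GREEN_LIGHT_M = 550e-9  # wavelength of green light in metres

# (label, aperture diameter in m, distance to target in m); made-up scenarios.
EXAMPLES = [
    ("2.4 m mirror (Hubble-sized), target 500 km away", 2.4, 500e3),
    ("2.4 m mirror, target 1,000,000 km away", 2.4, 1e9),
    ("5 mm pupil (roughly a human eye), target 1 km away", 0.005, 1e3),
]

for label, aperture, distance in EXAMPLES:
    detail = smallest_feature_m(GREEN_LIGHT_M, aperture, distance)
    print(f"{label}: smallest detail is about {detail:.3g} m")
```

Even a flawless Hubble-sized mirror looking down from 500 km can’t resolve details much smaller than about 14 cm in visible light, and from a million kilometres away anything smaller than about 280 m blurs into a single dot. The only ways to do better are a bigger aperture or a shorter wavelength.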

How Can I Ignore Optical Limits?

We can get around optical limitations by using a different medium. Several have been posited, including gravitational waves, neutrino bursts, and more exotic but vague things like “hyperspace comms”. We’ll explore more about these in the following section.

That concludes part 2. In part 3, we’ll explore some of the common mistakes and tropes of remote sensing in sci-fi.